13

March 2019

Soft-deletion is actually pretty hard

In this article, I will primarily focus on one of the most popular options to implement soft deletion in a Ruby on Rails project — acts_as_paranoid, which is based on adding a deleted_at column to every model which needs to support soft deletion. Some alternatives will also be discussed towards the end.

Goals

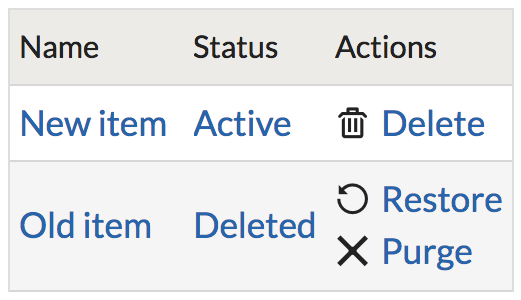

Users have various reasons to want soft deletion, from restoring an object deleted by accident, to recovering from malicious or unauthorized deletion, to recovering an object which was deleted intentionally but is needed again due to new circumstances. Some users also want a “recycling bin” or “archival” feature which allows segregating active objects from non-active ones, and soft deletion is one way of accomplishing this. The capability to delete and restore data needs to be available to end users with the appropriate role, not only ACL administrators or support personnel. Additionally, in order to allow for recovery, soft-deleted objects remain in the system and continue using system resources until permanently deleted.

Implementation approach

Soft deletion strategy

One of the most popular libraries to implement soft deletion is the acts_as_paranoid gem. Its general approach is:

- Add a deleted_at column to each table which supports soft deletion. Any record where that column has a non-NULL value is considered to be soft-deleted. When a record is deleted via Active Record (the default ORM library packaged with Ruby on Rails), instead of actually deleting the record from the database, populate the deleted_at column with the time of deletion.

- Add a default scope to the model supporting soft deletion, so that “normal” queries would exclude records marked as deleted, making deleted records (mostly) invisible to the application.

- Provide special methods to interact with soft-deleted records, including .with_deleted and .only_deleted to look up soft-deleted records, .destroy_fully! to permanently delete a soft-deleted record, and .recover to restore soft-deleted records (by nullifying their deleted_at field).
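
The three steps above can be illustrated with a minimal plain-Ruby sketch. It uses no Rails and is not the gem's implementation: SoftDeletableStore, Record, and the in-memory array standing in for a table are all assumptions made for illustration. Only the idea (stamp deleted_at, filter by default, opt in to see deleted rows) comes from the description above.

```ruby
# Plain-Ruby sketch of the soft-deletion mechanism (no Rails, no gem).
# SoftDeletableStore and Record are illustrative names only.
Record = Struct.new(:id, :name, :deleted_at)

class SoftDeletableStore
  def initialize
    @rows = []
  end

  def insert(record)
    @rows << record
    record
  end

  # "Default scope": normal lookups skip rows whose deleted_at is set.
  def all
    @rows.select { |r| r.deleted_at.nil? }
  end

  # Explicit opt-in lookups that include or isolate soft-deleted rows.
  def with_deleted
    @rows.dup
  end

  def only_deleted
    @rows.reject { |r| r.deleted_at.nil? }
  end

  # Soft delete: stamp the row instead of removing it.
  def destroy(record, now: Time.now)
    record.deleted_at = now
  end

  # Restore by nullifying deleted_at.
  def recover(record)
    record.deleted_at = nil
  end

  # Permanent deletion actually removes the row.
  def destroy_fully(record)
    @rows.delete(record)
  end
end

store = SoftDeletableStore.new
alpha = store.insert(Record.new(1, "Alpha", nil))
store.insert(Record.new(2, "Beta", nil))
store.destroy(alpha)
store.all.map(&:name)          # only "Beta" is visible to normal queries
store.only_deleted.map(&:name) # only "Alpha" is in the recycle bin
```

Note that every caller of all silently gets the filtered view, which is exactly the implicit behaviour whose side effects are discussed later in this article.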

Recovery strategy

With any soft deletion approach, one needs to balance the desire of some customers to recover objects (including those which were “permanently” deleted) against the desire of other customers for permanent deletion to be truly irrecoverable. This is a form of the omnipotence paradox: one cannot fully support both at the same time.

One approach is a role-based deletion capability, where some users (e.g. administrators) have the capability to irrecoverably delete an object, while other users may only soft-delete. This narrows the problem, but does not completely eliminate it, since even system administrators might want to recover objects “permanently” deleted by other administrators.

Another approach is time-based garbage collection, where all objects are soft-deleted (and placed in the “recycle bin”) for a pre-determined amount of time, during which they may be recovered. Any objects not recovered during this time will be permanently deleted by the system. Basecamp and Ruby on Rails founder David Heinemeier Hansson recently discussed this approach, emphasizing the importance of eventual permanent deletion.
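
As a rough illustration of time-based garbage collection, the plain-Ruby sketch below drops items whose deleted_at is older than a retention window. The 30-day window, BinItem, and purge_expired are assumptions for the example; in a real application this would more likely be a scheduled job issuing a database delete.

```ruby
# Sketch of a time-based purge. RETENTION_SECONDS, BinItem and purge_expired
# are hypothetical names; the 30-day window is an assumed policy.
BinItem = Struct.new(:id, :deleted_at)

RETENTION_SECONDS = 30 * 24 * 60 * 60

# Returns the items that survive: active items and soft-deleted items that are
# still inside the retention window. Older soft-deleted items are dropped.
def purge_expired(items, now: Time.now, retention: RETENTION_SECONDS)
  items.reject do |item|
    item.deleted_at && (now - item.deleted_at) > retention
  end
end
```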

Technical challenges

The approach provided by acts_as_paranoid is simple to understand and implement, and provides quick soft deletion functionality. It is therefore quite popular, especially in smaller applications. However, the approach of implicit soft deletion has some drawbacks, which become more serious as your application grows in complexity.

Implicit default scope

The default scope approach used by acts_as_paranoid has been consistently criticized as an anti-pattern by many Rails developers all the way back to 2013, 2014, and 2015. Some of the criticism (such as impacting model initialization) doesn’t apply to acts_as_paranoid. However, the general implicitness of modifying default queries generated by the system can have unexpected side effects, such as:

- Dissonance between Rails ORM and raw SQL. As your application grows, you may find it necessary to write raw SQL in specific areas, for performance or flexibility not provided by Active Record. Any such queries would need to be manually adjusted to account for soft deletion logic, which may come as a surprise for developers accustomed to the default scope magically working thanks to acts_as_paranoid.

- Conflict with other gems. Some gems, from state machines to logging libraries, also generate and use scopes. They may internally call .unscoped (for their own purposes), which would have the side effect of undoing acts_as_paranoid’s work. Alternatively, they may need to remain unaffected by acts_as_paranoid (because their business logic requires working with all records, even soft-deleted ones), and may not expect its filtering. An example is a cloning library such as deep_cloneable, used to support deep-copying a set of records: if your business logic requires that soft-deleted records are also copied, the gem may need to be adjusted to be able to detect them.

- Side effects in callbacks. Sometimes your application may need to interact with soft-deleted records (e.g. during deep-copying of a parent object), rather than always ignoring them. Any callbacks your models use would need to be customized on a case-by-case basis to ensure they work properly with soft-deleted objects. For example, a callback performing internal application logging should likely run for soft-deleted objects, while a callback that emails users about a state change in an object is something you probably don’t want to run for soft-deleted objects, since from the point of view of the user, the object has been deleted and thus should not appear in their inbox.

In short, acts_as_paranoid globally mutates all interactions with any model it works with, and any implicit global mutation has risks of side effects. These issues can be surmounted with careful curation of first- and third-party code, ensuring every piece is kept in sync and guarded against side effects. Custom code (such as targeted use of .unscoped) may also need to be used to mitigate unintended side effects of the implicit behaviour. As your application grows in size and complexity, you may find yourself chasing more and more side effects, and the cost-to-benefit ratio of an implicit approach may gradually worsen.

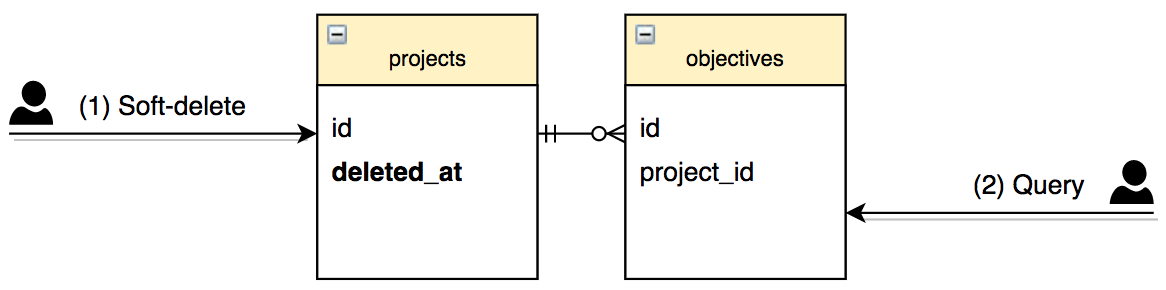

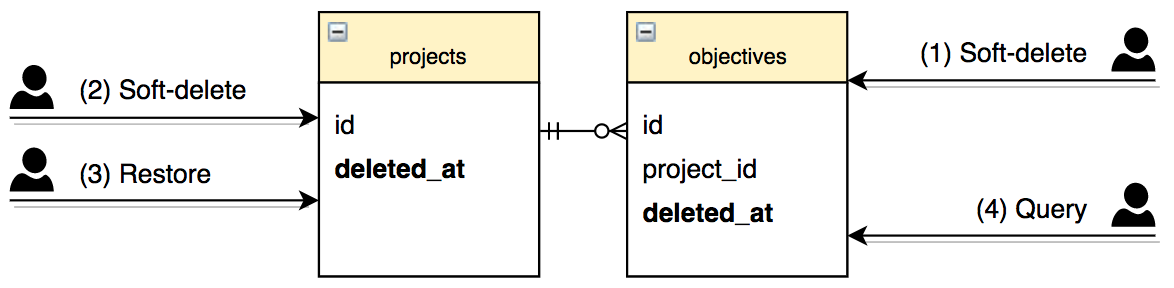

Hierarchical relationships

Most applications have some kind of semantic hierarchy in some of their models. For example, our Projects module has “projects” models containing many “objectives”, which in turn contain many “controls”. If we needed to support soft deletion on all these models, our business logic would imply that soft-deleting a project should also make all its objectives appear soft-deleted (but not vice versa), and soft-deleting an objective should make all of its controls appear soft-deleted. Some objects have more than one parent, further adding to the complexity.

The choice, therefore, is whether to store the deleted_at column on all the models, or only the parent one. Both approaches have drawbacks. Suppose we only store the soft-deleted flag on the project. If any part of your code directly accesses a control (ranging from a user request to a delayed job worker), your code now needs to check whether the control should be “implicitly” treated as soft-deleted because its project has been soft-deleted. This would need to be custom logic not provided by acts_as_paranoid, since the control does not have its own deleted_at flag (so a normal Active Record query would load it), and acts_as_paranoid is not aware of your application hierarchy or business logic rules. If you use an authorization gem such as CanCanCan, it may be configurable to detect this by setting appropriate conditions in your ability file, but that too is something you will need to implement and maintain manually, rather than magically getting it simply by using acts_as_paranoid.
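
The custom check described above could look roughly like the following plain-Ruby sketch, where only the top-level object carries a deleted_at value and children derive their state by walking up the hierarchy. HierarchyNode and effectively_deleted? are hypothetical names and are not part of acts_as_paranoid.

```ruby
# Sketch: deleted_at is set only on the top-level model; children derive
# their state from their ancestors. All names here are illustrative.
HierarchyNode = Struct.new(:name, :parent, :deleted_at) do
  # A node counts as soft-deleted if it, or any ancestor, has deleted_at set.
  def effectively_deleted?
    node = self
    while node
      return true if node.deleted_at
      node = node.parent
    end
    false
  end
end

project   = HierarchyNode.new("Project", nil, nil)
objective = HierarchyNode.new("Objective", project, nil)
control   = HierarchyNode.new("Control", objective, nil)

project.deleted_at = Time.now
control.effectively_deleted? # true, although the control itself is untouched
```

With real database tables, each step up the hierarchy would be an extra query or join, and every code path that loads a control directly has to remember to perform the check.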

If deleted_at is not synchronized on dependent models, the query in step 2 needs to be manually constructed to avoid loading the dependent model when the parent is deleted.

Alternatively, you may choose to add the deleted_at column to the dependent models as well, which will be automatically populated if the parent model has configured dependent: :destroy, avoiding the issues discussed in the previous paragraph. However, this approach is also not without drawbacks. If you use any custom SQL to delete records, or if you rely on database-level ON DELETE CASCADE (rather than dependent: :destroy) for performance or other reasons, the dependent objects’ deleted_at will not automatically be populated if the parent is deleted. You may need to write custom SQL or database triggers to handle this, adding more complexity and maintenance burden.

Furthermore, consider the following sequence of events: (1) the dependent object is soft-deleted, (2) its parent object is soft-deleted, and (3) the parent object is restored. Should the dependent object be restored or not? One could argue it shouldn’t, because it was explicitly deleted before the parent, but how will you differentiate this sequence from the one where step (1) didn’t happen? It’s not possible if there is only one deleted_at column per table, since the database won’t track the difference between explicit and cascade deletion. This might be solvable by adding different database flags, such as deleted_at vs cascade_deleted_at, but again, this adds extra complexity, and also goes beyond the scope of the acts_as_paranoid gem (which would then itself need to be modified to check both columns to determine whether a record has been deleted).
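
To make the two-flag idea concrete, here is a small plain-Ruby sketch of the three-step sequence. The cascade_deleted_at attribute is the hypothetical second column mentioned above, and Dependent and ParentRecord are illustrative classes, not anything acts_as_paranoid provides.

```ruby
# Sketch of separate explicit and cascade flags (all names are hypothetical).
class Dependent
  attr_accessor :deleted_at, :cascade_deleted_at

  # Deleted if either flag is set.
  def deleted?
    !deleted_at.nil? || !cascade_deleted_at.nil?
  end
end

class ParentRecord
  attr_reader :dependents
  attr_accessor :deleted_at

  def initialize(dependents)
    @dependents = dependents
  end

  # Soft-deleting the parent only touches the cascade flag of its dependents.
  def soft_delete(now = Time.now)
    self.deleted_at = now
    dependents.each { |d| d.cascade_deleted_at = now }
  end

  # Restoring clears only the cascade flag, so explicit deletions survive.
  def restore
    self.deleted_at = nil
    dependents.each { |d| d.cascade_deleted_at = nil }
  end
end
```

After step (3), a dependent that was explicitly deleted in step (1) still has its own deleted_at set and stays deleted, while its siblings come back with the parent.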

If deleted_at is synchronized on dependent models, deleting and restoring the parent model may lose the history of whether the dependent has been explicitly deleted earlier (step 1) or not.

Validations and constraints

Soft-deleted records also come with validation challenges. For example, suppose your business logic enforces a uniqueness constraint on a project name. Should the constraint include deleted objects? If so, a name that was previously used for a soft-deleted object will be unavailable for future use, which may come as a surprise for a user who does not see soft-deleted projects, and questions why their chosen name is unavailable. If your constraint is enforced at the database level using a unique index, rather than only a Rails validation, then it will apply to deleted records by default (unless you build a partial index which excludes soft-deleted records).

On the other hand, if the validation constraint does not cover soft-deleted objects, what will happen if (1) a project has been soft-deleted, (2) a new project with the same name has been created, and (3) someone attempts to restore the old project? Now there is a validation conflict preventing the restoration, because doing so would introduce a name collision. You would have to bypass the validation (or remove it entirely), or perform custom logic such as renaming the old project to have an “(old)” suffix at the end of its name.
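
One possible shape for that custom logic is sketched below in plain Ruby. NamedProject, restore_project, and the policy of appending " (old)" until the name is free are all assumptions made for illustration; a real implementation would also need to handle concurrent writes and any database-level unique index.

```ruby
# Sketch of restoring with a name-collision check (names are hypothetical).
NamedProject = Struct.new(:name, :deleted_at)

def restore_project(project, all_projects)
  active_names = all_projects
    .select { |p| p.deleted_at.nil? }
    .map(&:name)

  # Rename until the name no longer collides with an active project.
  project.name += " (old)" while active_names.include?(project.name)
  project.deleted_at = nil
  project
end
```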

Performance and scalability

An important issue to consider is the performance and scalability impact soft-deleted records have on your system. Even though such records may be invisible to most users, they remain in the same database as your other records, and (at minimum) will continue taking up space. If deleted records are included in your indexing (which will be the default behaviour, unless you make partial indexes which exclude them), then any such indexes will impact your write latency as your data set grows, which will be particularly impactful if you expect a large deleted-to-active ratio (e.g. if you use soft deletion as a means of archival functionality).

On the other hand, if your indexes exclude deleted records, then you will not be able to benefit from them in any part of the code which actually needs to look them up (such as cloning or logging frameworks which need to work with all records, including soft-deleted ones).

Finally, if your application enforces usage quotas for end users, you will need to decide whether soft-deleted records count against the quota. If so, as your users soft-delete records, they will have less room for other records. If not, then you may end up with more disk or other system resource usage than you anticipated the quotas would allow for, impacting your system performance, your operational costs, or both.

Security

While soft deletion using a column flag can mitigate malicious deletion of data, that could also be accomplished by modifying an existing record to wipe or corrupt its fields, and the latter approach would not be solvable by soft deletion. An audit trail mechanism tracking changes to your data is a better fit to detect unauthorized or erroneous changes.

Furthermore, in web applications at least, data theft (which cannot be reversed once it has occurred) is generally a more severe risk than data loss or corruption (which may be restorable from a database backup), and soft deletion does not mitigate this. As such, using soft deletion as a security mechanism only works in a narrow range of cases, and should be complemented by other techniques.

The goal of this analysis is not to discourage the use of acts_as_paranoid in a blanket way — using it has the clear advantage of initial simplicity and speed to production, compared to other approaches. But if you use it, you should have a plan to address its limitations as your application grows and evolves.

Alternatives

Export and re-import

Other than the in-database approach provided by acts_as_paranoid, you may consider other alternatives to soft deletion. You might export deleted objects (to CSV, JSON, SQL dump, or other format) and store them on S3 or elsewhere. Removing deleted items from the database may address some performance and scalability concerns.

However, any custom import and export logic would likely be more complex than a simple database deleted_at flag, while still needing to handle the same challenges of referential integrity, hierarchical relationships, and other business logic rules. Some of the challenges may be even more complex — for example, if your schema was migrated after an export, you’d need to ensure the exported data from the old schema can be imported back later.
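
The schema-migration concern can be made explicit by recording a schema version alongside each export, as in this plain-Ruby sketch. SCHEMA_VERSION, export_record, and import_record are hypothetical names, and rejecting mismatched versions outright is just one possible policy; an actual system might instead run the old data through migration steps.

```ruby
require "json"

# Sketch of an export envelope that records the schema version.
# All names and the version number are illustrative assumptions.
SCHEMA_VERSION = 2

def export_record(attributes)
  JSON.generate("schema_version" => SCHEMA_VERSION, "attributes" => attributes)
end

# Refuses to import data written under a different schema, so the mismatch
# surfaces explicitly instead of producing a malformed restored record.
def import_record(json)
  payload = JSON.parse(json)
  unless payload["schema_version"] == SCHEMA_VERSION
    raise ArgumentError,
          "exported under schema #{payload['schema_version']}, current is #{SCHEMA_VERSION}"
  end
  payload["attributes"]
end
```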

Event sourcing

Another approach is event sourcing. An event sourcing database is an append-only, immutable database that records all events as transactions: modifying or deleting a record creates a new transaction instead (much like in accounting, where reversing a transaction makes a new journal entry rather than erasing the previous one). In an event sourcing database, one would not need to manually implement soft deletion, since an old version could always be restored from an earlier revision.
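
The idea can be sketched in a few lines of plain Ruby: events are only ever appended, and the current (or any earlier) state is derived by replaying them. EventLog and its three event types are illustrative assumptions, not the API of any particular event sourcing product.

```ruby
# Sketch of an append-only event log (all names are illustrative).
class EventLog
  Event = Struct.new(:type, :id, :data)

  def initialize
    @events = []
  end

  # Events are appended and frozen; existing entries are never modified.
  def append(type, id, data = {})
    @events << Event.new(type, id, data).freeze
    self
  end

  # Rebuild state by replaying the first `upto` events in order.
  def state(upto: @events.size)
    @events.first(upto).each_with_object({}) do |event, records|
      case event.type
      when :created then records[event.id] = event.data.dup
      when :updated then records[event.id] = records.fetch(event.id).merge(event.data)
      when :deleted then records.delete(event.id)
      end
    end
  end
end

log = EventLog.new
log.append(:created, 1, name: "Audit").append(:deleted, 1)
log.state          # empty: record 1 is deleted in the latest state
log.state(upto: 1) # record 1 is visible again in the earlier revision
```

Deletion here is just another event, so "restoring" amounts to reading an earlier revision or appending a compensating event.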

Unfortunately, while event sourcing models have been popular in some technologies (in particular, version control systems like Git, or wikis like MediaWiki used by Wikipedia, all treat changes as revisions and natively support version history and reverts), they have yet to become mainstream in relational databases. Some proprietary event sourcing databases exist, but none, for example, have become so popular as to be supported by Amazon RDS.

Source: https://medium.com