This is especially true for sitemaps, which are almost as old as SEO itself.

The problem is, when every man and their dog has posted answers in forums, published recommendations on blogs and amplified opinions with social media, it takes time to sort valuable advice from misinformation.

So while most of us share a general understanding that submitting a sitemap to Google Search Console is important, you may not know the intricacies of how to implement sitemaps in a way that drives SEO key performance indicators (KPIs).

Let’s clear up the confusion around best practices for sitemaps today.

In this article we cover:

- What is an XML sitemap

- XML sitemap format

- Types of sitemaps

- XML sitemap indexation optimization

- XML sitemap best practice checklist

What Is an XML Sitemap

In simple terms, an XML sitemap is a list of your website’s URLs. It acts as a roadmap to tell search engines what content is available and how to reach it.

In the example above, a search engine will find all nine pages in a sitemap with one visit to the XML sitemap file.

On the website, it will have to jump through five internal links to find page 9.

This ability of an XML sitemap to assist crawlers in faster indexation is especially important for websites that:

- Have thousands of pages and/or a deep website architecture.

- Frequently add new pages.

- Frequently change content of existing pages.

- Suffer from weak internal linking and orphan pages.

- Lack a strong external link profile.

Side note: Submitting a sitemap with noindex URLs can also speed up deindexation. This can be more efficient than removing URLs in Google Search Console if you have many to be deindexed. But use this with care and be sure you only add such URLs temporarily to your sitemaps.

Key Takeaway

Even though search engines can technically find your URLs without it, by including pages in an XML sitemap you’re indicating that you consider them to be quality landing pages.

While there is no guarantee that an XML sitemap will get your pages crawled, let alone indexed or ranked, submitting one certainly increases your chances.

XML Sitemap Format

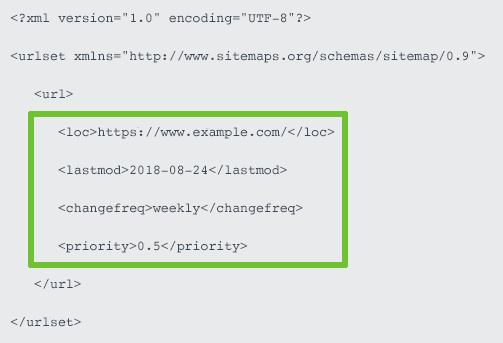

A one-page site using all available tags would have this XML sitemap:

But how should an SEO use each of these tags? Is all the metadata valuable?

Loc (a.k.a. Location) Tag

This compulsory tag contains the absolute, canonical version of the URL location. It should accurately reflect your site protocol (http or https) and whether you have chosen to include or exclude www.

For international websites, this is also where you can implement your hreflang handling. By using the xhtml:link element to indicate the language and region variants for each URL, you keep that markup out of your pages and reduce page load time, an advantage that link elements in the <head> or HTTP headers can’t offer.

Yoast has an epic post on hreflang for those wanting to learn more.
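To make the hreflang handling more concrete, here is a minimal Python sketch that writes xhtml:link alternates into a sitemap. The function name, the input shape and the example.com URLs are assumptions for illustration, not part of any SEO tool. It follows the common pattern where every language variant gets its own entry and each entry lists all variants, itself included.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"

# Serialize with the sitemap namespace as default and "xhtml" as a prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("xhtml", XHTML_NS)


def build_hreflang_sitemap(variant_groups):
    """Build a sitemap string from a list of {hreflang code: absolute URL} dicts.

    Each dict describes one piece of content and all of its language variants.
    (Hypothetical helper, written for this example.)
    """
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for variants in variant_groups:
        # Every variant gets its own <url> entry...
        for url in variants.values():
            url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = url
            # ...and each entry lists all variants, including itself.
            for lang, href in variants.items():
                ET.SubElement(
                    url_el,
                    f"{{{XHTML_NS}}}link",
                    {"rel": "alternate", "hreflang": lang, "href": href},
                )
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_hreflang_sitemap([
        {"en": "https://example.com/en/", "de": "https://example.com/de/"},
    ]))
```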

Lastmod (a.k.a. Last Modified) Tag

An optional but highly recommended tag used to communicate the file’s last modified date and time.

John Mueller acknowledged that Google does use the lastmod metadata to understand when a page last changed and whether it should be crawled, contradicting advice from Illyes in 2015.

The last modified time is especially critical for content sites as it assists Google to understand that you are the original publisher.

It’s also powerful to communicate freshness, but be sure to update modification date only when you have made meaningful changes.

Trying to trick search engines into believing your content is fresh, when it’s not, may result in a Google penalty.

Changefreq (a.k.a. Change Frequency) Tag

Once upon a time, this optional tag hinted to search engines how frequently content on the URL was expected to change.

But Mueller has stated that “change frequency doesn’t really play that much of a role with sitemaps” and that “it is much better to just specify the time stamp directly”.

Priority Tag

This optional tag ostensibly tells search engines how important a page is relative to your other URLs, on a scale from 0.0 to 1.0.

At best, it was only ever a hint to search engines and both Mueller and Illyes have clearly stated they ignore it.

Key Takeaway

Your website needs an XML sitemap, but not necessarily the priority and change frequency metadata.

Use the lastmod tags accurately and focus your attention on ensuring you have the right URLs submitted.
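As a rough illustration of that takeaway, the Python sketch below writes a sitemap that carries only the loc and lastmod tags. The function name and the sample URL are made up for the example. The timestamps are written in the W3C datetime format that sitemaps expect.

```python
from datetime import datetime, timezone
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
ET.register_namespace("", SITEMAP_NS)


def build_sitemap(pages):
    """Build a sitemap string from (absolute_url, last_modified) pairs.

    last_modified should be a timezone-aware datetime that reflects the last
    meaningful content change. (Hypothetical helper for this example.)
    """
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, last_modified in pages:
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = loc
        # isoformat() on an aware datetime yields a W3C datetime string.
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}lastmod").text = (
            last_modified.isoformat(timespec="seconds")
        )
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)),
    ]))
```

Note that changefreq and priority are simply left out, in line with the advice above.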

Types of Sitemaps

There are many different types of sitemaps. Let’s look at the ones you actually need.

XML Sitemap Index

XML sitemaps have a couple of limitations:

- A maximum of 50,000 URLs.

- An uncompressed file size limit of 50MB.

Sitemaps can be compressed using gzip (the file name would become something similar to sitemap.xml.gz) to save bandwidth for your server. But once unzipped, the sitemap still can’t exceed either limit.

Whenever you exceed either limit, you will need to split your URLs across multiple XML sitemaps.
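A quick way to check both limits before publishing could look like the Python sketch below. The constant and function names are illustrative assumptions. The size check runs on the uncompressed bytes, since gzip only reduces what travels over the wire.

```python
import gzip

MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50MB, measured on the uncompressed file


def within_limits(url_count, xml_bytes):
    """Return True if a sitemap respects both the URL and the size limit."""
    return url_count <= MAX_URLS and len(xml_bytes) <= MAX_BYTES


def compress_sitemap(xml_bytes):
    """Return the gzip-compressed bytes, e.g. to save as sitemap.xml.gz."""
    return gzip.compress(xml_bytes)
```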

Those sitemaps can then be combined into a single XML sitemap index file, often named sitemap-index.xml. Essentially, a sitemap for sitemaps.

For exceptionally large websites that want to take a more granular approach, you can also create multiple sitemap index files. For example:

- sitemap-index-articles.xml

- sitemap-index-products.xml

- sitemap-index-categories.xml

But be aware that you cannot nest sitemap index files.
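Here is one way the splitting and the index file could be sketched in Python. The function names and the example.com URLs are assumptions for illustration. The index lists regular sitemap files only, never other index files.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000
ET.register_namespace("", SITEMAP_NS)


def chunk_urls(urls, size=MAX_URLS):
    """Split a list of URLs into chunks that each fit in one sitemap."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Build a sitemap index string pointing at the given sitemap files."""
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for sitemap_url in sitemap_urls:
        sitemap_el = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(sitemap_el, f"{{{SITEMAP_NS}}}loc").text = sitemap_url
    return ET.tostring(index, encoding="unicode")


if __name__ == "__main__":
    urls = [f"https://example.com/products/{i}" for i in range(120_000)]
    chunks = chunk_urls(urls)
    # One sitemap file per chunk, all referenced from a single index.
    files = [
        f"https://example.com/sitemap-products-{n}.xml"
        for n in range(1, len(chunks) + 1)
    ]
    print(build_sitemap_index(files))
```

With 120,000 URLs, this produces three sitemaps (50,000, 50,000 and 20,000 URLs) behind one index.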

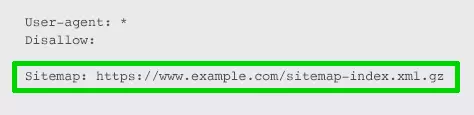

For search engines to easily find every one of your sitemap files at once, you will want to:

- Submit your sitemap index(es) to Google Search Console and Bing Webmaster Tools.

- Specify your sitemap index URL(s) in your robots.txt file, pointing search engines directly to your sitemap as you welcome them to crawl.
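For the robots.txt step, each sitemap index gets its own `Sitemap:` line with an absolute URL. The small Python sketch below generates those lines. The helper name and the example.com URLs are assumptions for illustration.

```python
def robots_txt_sitemap_lines(sitemap_index_urls):
    """Return the Sitemap: lines to append to a robots.txt file."""
    lines = []
    for url in sitemap_index_urls:
        # robots.txt expects the full URL, not a relative path.
        if not url.startswith(("http://", "https://")):
            raise ValueError(f"Sitemap URL must be absolute: {url}")
        lines.append(f"Sitemap: {url}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(robots_txt_sitemap_lines([
        "https://example.com/sitemap-index-articles.xml",
        "https://example.com/sitemap-index-products.xml",
    ]))
```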

You can also submit sitemaps by pinging them to Google.

But beware:

Google no longer pays attention to hreflang entries in “unverified sitemaps”, which Tom Anthony believes to mean those submitted via the ping URL.

XML Image Sitemap

Image sitemaps were designed to improve indexation of image content.

In modern day SEO, however, images are embedded within page content, so will be crawled along with the page URL.

Moreover, it’s best practice to utilize JSON-LD schema.org/ImageObject markup to call out image properties to search engines as it provides more attributes than an image XML sitemap.

Because of this, an XML image sitemap is unnecessary for most websites. Including an image sitemap would only waste crawl budget.

The exception to this is if images help drive your business, such as a stock photo website or ecommerce site gaining product page sessions from Google Image search.

Know that images don’t have to be on the same domain as your website to be submitted in a sitemap. You can use a CDN as long as it’s verified in Search Console.

XML Video Sitemap

Similar to images, if videos are critical to your business, submit an XML video sitemap. If not, a video sitemap is unnecessary.

Save your crawl budget for the page the video is embedded into, ensuring you mark up all videos with JSON-LD as a schema.org/VideoObject.

Google News Sitemap

Only sites registered with Google News should use this sitemap.

If your site is registered, include articles published in the last two days, up to a limit of 1,000 URLs per sitemap, and update with fresh articles as soon as they’re published.

Contrary to some online advice, Google News sitemaps don’t support image URLs.

Google recommends using schema.org image or og:image to specify your article thumbnail for Google News.
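The two-day window and the 1,000 URL cap mentioned above can be applied with a small filter. The Python sketch below is one possible take, with a made-up function name and input shape. Keeping only the newest articles when the cap is hit is a choice made for this example. Overflow could equally go into a second news sitemap.

```python
from datetime import datetime, timedelta, timezone


def select_news_articles(articles, now, max_age=timedelta(days=2), limit=1000):
    """Pick the (url, published_at) pairs that belong in a news sitemap.

    Keeps articles published within max_age of now, newest first, and
    truncates the result to limit entries.
    """
    cutoff = now - max_age
    recent = [article for article in articles if article[1] >= cutoff]
    recent.sort(key=lambda article: article[1], reverse=True)
    return recent[:limit]
```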

Mobile Sitemap

This is not needed for most websites.

Why? Because Mueller confirmed mobile sitemaps are for feature phone pages only, not for smartphone compatibility.

So unless you have unique URLs specifically designed for feature phones, a mobile sitemap will be of no benefit.

HTML Sitemap

XML sitemaps take care of search engine needs. HTML sitemaps were designed to assist human users to find content.

The question becomes, if you have a good user experience and well crafted internal links, do you need an HTML sitemap?

Check the page views of your HTML sitemap in Google Analytics. Chances are, they’re very low. If not, it’s a good indication that you need to improve your website navigation.

HTML sitemaps are generally linked in website footers, taking link equity from every single page of your website.

Ask yourself. Is that the best use of that link equity? Or are you including an HTML sitemap as a nod to legacy website best practices?

If few humans use it. And search engines don’t need it as you have strong internal linking and an XML sitemap. Does that HTML sitemap have a reason to exist? I would argue no.

Dynamic XML Sitemap

Static sitemaps are simple to create using a tool such as Screaming Frog.

The problem is, as soon as you create or remove a page, your sitemap is outdated. If you modify the content of a page, the sitemap won’t automatically update the lastmod tag.

So unless you love manually creating and uploading sitemaps for every single change, it’s best to avoid static sitemaps.

Dynamic XML sitemaps, on the other hand, are automatically updated by your server to reflect relevant website changes as they occur.

To create a dynamic XML sitemap:

- Ask your developer to code a custom script, being sure to provide clear specifications

- Use a dynamic sitemap generator tool

- Install a plugin for your CMS, for example the Yoast SEO plugin for WordPress
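If you go the custom script route, the core idea is that the sitemap is rendered from your page data at request time rather than stored as a file. Below is a minimal Python sketch of that idea as a WSGI app. The `PAGES` dict is a hypothetical stand-in for a CMS database query, and all names are assumptions for illustration. A production version would add caching and pull lastmod from real content timestamps.

```python
from datetime import datetime, timezone
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
ET.register_namespace("", SITEMAP_NS)

# Hypothetical stand-in for a CMS query: URL -> last meaningful change.
PAGES = {
    "https://example.com/": datetime(2024, 1, 15, tzinfo=timezone.utc),
}


def render_sitemap(pages):
    """Render the current page data as sitemap XML bytes."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, last_modified in sorted(pages.items()):
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = loc
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}lastmod").text = (
            last_modified.date().isoformat()
        )
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def app(environ, start_response):
    """WSGI app that builds /sitemap.xml fresh on every request."""
    if environ.get("PATH_INFO") != "/sitemap.xml":
        start_response("404 Not Found", [("Content-Type", "text/plain")])
        return [b"Not found"]
    body = render_sitemap(PAGES)
    start_response("200 OK", [
        ("Content-Type", "application/xml"),
        ("Content-Length", str(len(body))),
    ])
    return [body]
```

For a local trial, the app could be served with `wsgiref.simple_server.make_server` from the standard library. Any page added to or removed from `PAGES` shows up in the next response without a manual upload.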

Key Takeaway

Dynamic XML sitemaps and a sitemap index are modern best practice. Mobile and HTML sitemaps are not.

Use image, video and Google News sitemaps only if improved indexation of these content types drives your KPIs.

XML Sitemap Indexation Optimization

Now for the fun part. How do you use XML sitemaps to drive SEO KPIs?

Only Include SEO Relevant Pages in XML Sitemaps

An XML sitemap is a list of pages you recommend to be crawled, which isn’t necessarily every page of your website.

A search spider arrives at your website with an “allowance” for how many pages it will crawl.

The XML sitemap indicates you consider the included URLs to be more important than those that aren’t blocked but aren’t in the sitemap.

You are using it to tell search engines “I’d really appreciate it if you’d focus on these URLs in particular.”

Essentially, it helps you use crawl budget effectively.

By including only SEO relevant pages, you help search engines crawl your site more intelligently in order to reap the benefits of better indexation.

You should exclude:

- Non-canonical pages.

- Duplicate pages.

- Paginated pages.

- Parameter or session ID based URLs.

- Site search result pages.

- Reply to comment URLs.

- Share via email URLs.

- URLs created by filtering that are unnecessary for SEO.

- Archive pages.

- Any redirections (3xx), missing pages (4xx) or server error pages (5xx).

- Pages blocked by robots.txt.

- Pages with noindex.

- Resource pages accessible by a lead gen form (e.g., white paper PDFs).

- Utility pages that are useful to users, but not intended to be landing pages (login page, contact us, privacy policy, account pages, etc.).
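Several of the exclusions above are mechanical enough to automate. The Python sketch below shows one possible filter. The `Page` fields are assumptions about what your crawl or CMS data might expose, and the "?" check is a deliberately crude proxy for parameter and session ID URLs.

```python
from dataclasses import dataclass


@dataclass
class Page:
    # Hypothetical record; adapt the fields to your own crawl or CMS export.
    url: str
    canonical_url: str
    status_code: int
    noindex: bool = False
    blocked_by_robots: bool = False
    is_utility: bool = False  # login, contact us, privacy policy, etc.


def is_sitemap_worthy(page):
    """Return True if the page passes the basic exclusion rules."""
    return (
        page.status_code == 200                # no 3xx, 4xx or 5xx
        and page.url == page.canonical_url     # canonical version only
        and "?" not in page.url                # no parameter or session URLs
        and not page.noindex
        and not page.blocked_by_robots
        and not page.is_utility
    )


def sitemap_urls(pages):
    """Return the URLs that should be listed in the XML sitemap."""
    return [page.url for page in pages if is_sitemap_worthy(page)]
```

Judgment calls such as archive pages or filter URLs that are "unnecessary for SEO" still need a human decision before they are flagged in the data.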

I want to share an example from Michael Cottam about prioritizing pages:

Say your website has 1,000 pages. 475 of those 1,000 pages are SEO relevant content. You highlight those 475 pages in an XML sitemap, essentially asking Google to deprioritize indexing the remainder.

Now, let’s say Google crawls those 475 pages, and algorithmically decides that 175 are “A” grade, 200 are “B+”, and 100 “B” or “B-”. That’s a strong average grade, and probably indicates a quality website to which to send users.

Contrast that against submitting all 1,000 pages via the XML sitemap. Now, Google looks at the 1,000 pages you say are SEO relevant content, and sees over 50 percent are “D” or “F” pages. Your average grade isn’t looking so good anymore and that may harm your organic sessions.

But remember, Google is going to use your XML sitemap only as a clue to what’s important on your site.

Just because it’s not in your XML sitemap doesn’t necessarily mean that Google won’t index those pages.

When it comes to SEO, overall site quality is a key factor.

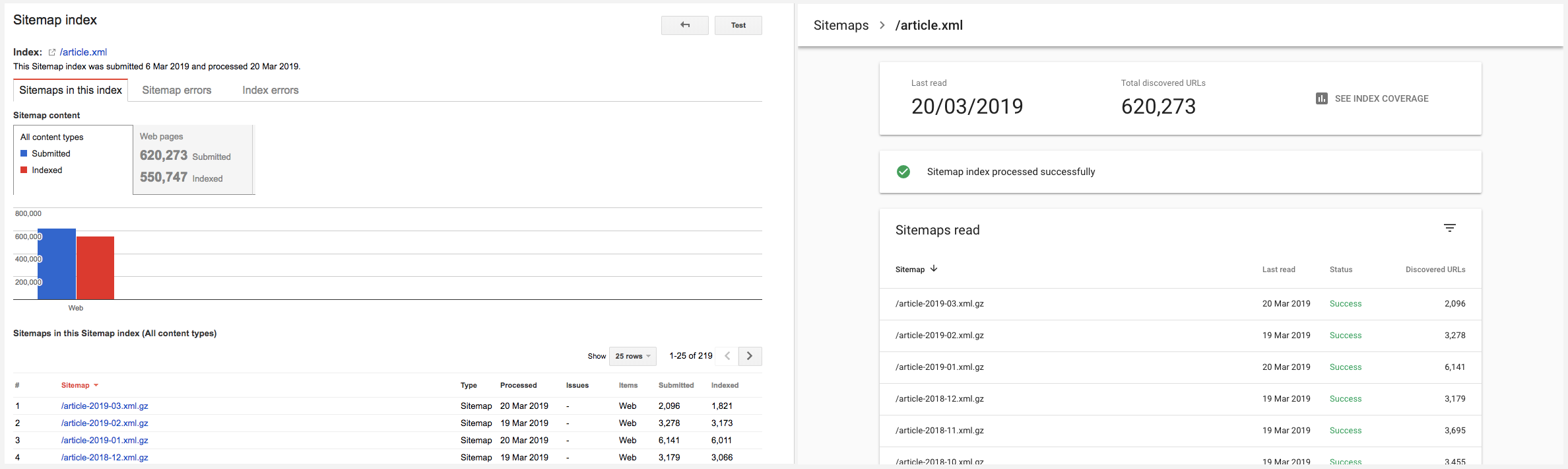

To assess the quality of your site, turn to the sitemap related reporting in Google Search Console (GSC).

Key Takeaway

Manage crawl budget by limiting XML sitemap URLs only to SEO relevant pages and invest time to reduce the number of low-quality pages on your website.

Fully Leverage Sitemap Reporting

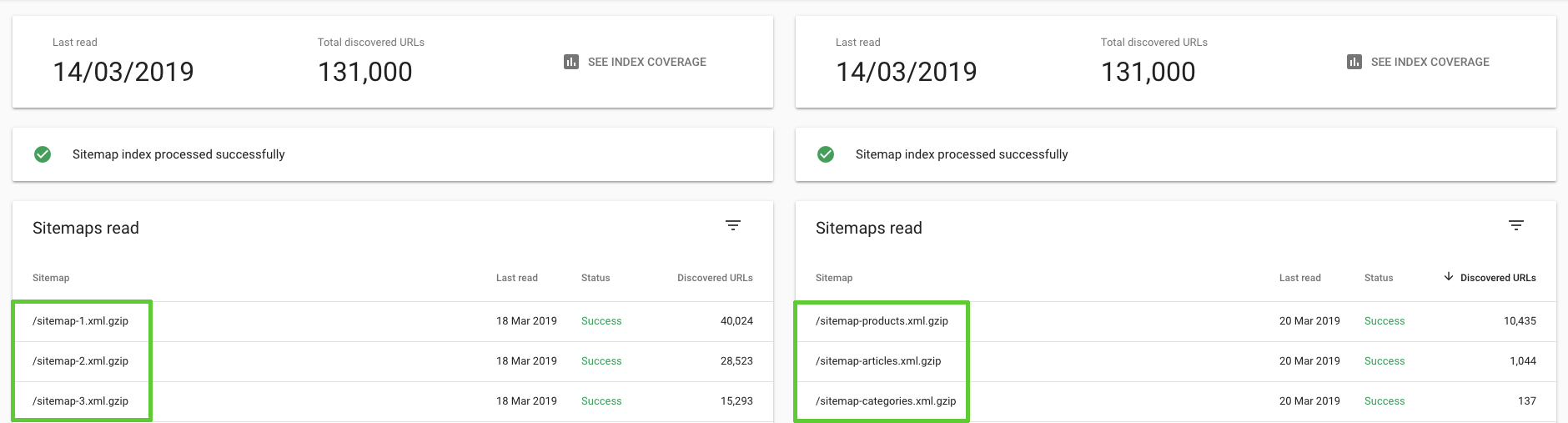

The sitemaps section in the new Google Search Console is not as data rich as what was previously offered.

Its primary use now is to confirm your sitemap index has been successfully submitted.

If you have chosen to use descriptive naming conventions, rather than numeric, you can also get a feel for the number of different types of SEO pages that have been “discovered” – aka all URLs found by Google via sitemaps as well as other methods such as following links.

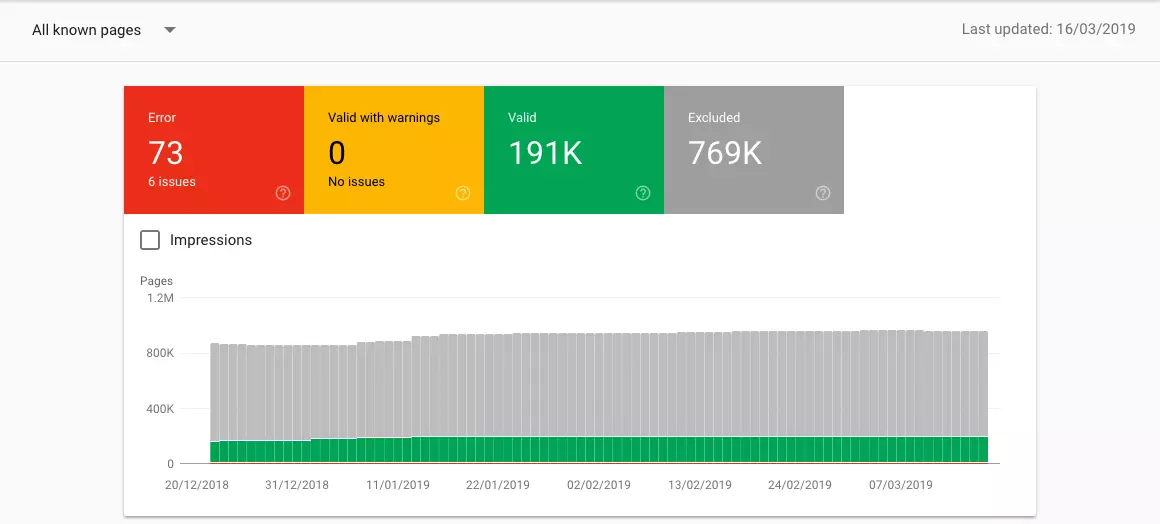

In the new GSC, the more valuable area for SEOs in regard to sitemaps is the Index Coverage report.

The report will default to “All known pages”. Here you can:

- Address any “Error” or “Valid with warnings” issues. These often stem from conflicting robots directives. Once solved, be sure to validate your fix via the Coverage report.

- Look at indexation trends. Most sites are continually adding valuable content, so “Valid” pages (aka those indexed by Google) should steadily increase. Understand the cause of any dramatic changes.

- Select “Valid” and look in details for the type “Indexed, not submitted in sitemap”. These are pages where you and Google disagree on their value. For example, you may not have submitted your privacy policy URL, but Google has indexed the page. In such cases, there is no action to take. What you need to be looking out for are indexed URLs which stem from poor pagination handling, poor parameter handling, duplicate content or pages being accidentally left out of sitemaps.

Afterwards, limit the report to the SEO relevant URLs you have included in your sitemap by changing the drop down to “All submitted pages”. Then check the details of all “Excluded” pages.

Reasons for exclusion of sitemap URLs can be put into four action groups:

- Quick wins: For duplicate content, canonicals, robots directives, 4xx HTTP status codes, redirects or legal exclusions, put the appropriate fix in place.

- Investigate page: For both “Submitted URL dropped” and “Crawl anomaly” exclusions, investigate further by using the Fetch as Google tool.

- Improve page: For “Crawled – currently not indexed” pages, review the content and internal links of the page (or page type, as generally it will be many URLs of a similar breed). Chances are, it’s suffering from thin content, unoriginal content or is orphaned.

- Improve domain: For “Discovered – currently not indexed” pages, Google notes that the typical reason for exclusion is that it “tried to crawl the URL but the site was overloaded”. Don’t be fooled. It’s more likely that Google decided “it’s not worth the effort” to crawl due to poor internal linking or low content quality seen from the domain. If you see a larger number of these exclusions, review the SEO value of the pages (or page types) you have submitted via sitemaps, focus on optimizing crawl budget and review your information architecture, including parameters, from both a link and content perspective.

Whatever your plan of action, be sure to note down benchmark KPIs.

The most useful metric to assess the impact of sitemap optimization efforts is the “All submitted pages” indexation rate – calculated by taking the percentage of valid pages out of total discovered URLs.

Work to get this above 80%.
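The calculation itself is simple. Here is a small Python sketch with made-up numbers. The function name and the figures are purely illustrative, not benchmarks from Search Console.

```python
def indexation_rate(valid_pages, total_pages):
    """Return the share of submitted URLs that are valid, as a percentage."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return 100 * valid_pages / total_pages


if __name__ == "__main__":
    # Hypothetical report: 380 valid pages out of 475 submitted URLs.
    rate = indexation_rate(380, 475)
    print(f"{rate:.1f}%")  # prints "80.0%"
```

Tracking this number per sitemap or per sitemap index over time makes it easier to see whether your fixes are moving the needle.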

Why not 100%? Because if you have focussed all your energy on ensuring every SEO relevant URL you currently have is indexed, you likely missed opportunities to expand your content coverage.

Note: If you run a larger website and have chosen to break it down into multiple sitemap indexes, you will be able to filter by those indexes. This will allow you to:

- See the overview chart on a more granular level.

- See a larger number of relevant examples when investigating a type of exclusion.

- Tackle indexation rate optimization section by section.

Key Takeaway

In addition to identifying warnings and errors, you can use the Index Coverage report as an XML sitemap sleuthing tool to isolate indexation problems.

XML Sitemap Best Practice Checklist

Do invest time to:

✓ Include hreflang tags in XML sitemaps

✓ Include the <loc> and <lastmod> tags

✓ Compress sitemap files using gzip

✓ Use a sitemap index file

✓ Use image, video and Google News sitemaps only if indexation drives your KPIs

✓ Dynamically generate XML sitemaps

✓ Ensure URLs are included only in a single sitemap

✓ Reference sitemap index URLs in robots.txt

✓ Submit sitemap index to both Google Search Console and Bing Webmaster Tools

✓ Include only SEO relevant pages in XML sitemaps

✓ Fix all errors and warnings

✓ Analyze trends and types of valid pages

✓ Calculate submitted pages indexation rates

✓ Address causes of exclusion for submitted pages

Now, go check your own sitemap and make sure you’re doing it right.

Source: www.searchenginejournal.com